Beyond Model Wars: Why Context and Integration Are the Real AI Battlegrounds

In the tech community, there's endless chatter about which AI model is fastest, cheapest, or most accurate. While OpenAI, Anthropic, Google, and others duke it out over parameters and benchmarks, I believe we're missing the real revolution happening right under our noses. The next frontier in AI isn't just about raw model power—it's about context length and meaningful integration.

The Context Revolution: Memory That Matters

When we talk about context in AI, we're really talking about memory and understanding, not just processing power. Current AI models are like brilliant amnesiacs: they can solve complex problems, but once a conversation exceeds their context window the earliest parts fall out of view, and by default nothing carries over to the next session.
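To make the amnesia concrete, here is a minimal sketch of how a fixed context window drops older messages. It is illustrative only: `count_tokens` and `fit_to_window` are hypothetical helpers, and counting whitespace-separated words is an assumed stand-in for a real tokenizer.

```python
from collections import deque


def count_tokens(text: str) -> int:
    # Assumption: one whitespace-separated word counts as one token.
    # Real tokenizers split text differently.
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages that fit inside the token budget."""
    kept: deque[str] = deque()
    used = 0
    # Walk backwards from the newest message; stop at the first one
    # that would push the total over the budget.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.appendleft(message)
        used += cost
    return list(kept)


history = [
    "We chose Postgres because of the reporting requirements",
    "The deploy failed twice last week",
    "Why is the reports page slow today",
]

# With a 14-token budget, the oldest message (the reason for the
# Postgres decision) no longer fits and is silently dropped.
visible = fit_to_window(history, max_tokens=14)
```

The dropped message is exactly the kind of "why did we decide this" knowledge that a longer or persistent context would keep available.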

True contextual AI can maintain the thread of understanding across hours, days, or even weeks of interaction. This isn't just convenient; it's transformative.

Why Long Context Changes Everything

- Institutional Knowledge Retention: Imagine an AI that can ingest your entire codebase, documentation, and Slack history, then use that context to help new team members get up to speed or solve bugs with full awareness of why decisions were made years ago.

- Nuanced Understanding Over Time: For creative work, an AI that remembers the evolution of your project—the directions you tried and abandoned, the feedback you've received—becomes a true collaborator rather than just a tool.

- Relationship Building: We build trust with people over repeated interactions where context is preserved. AI that maintains memory of previous conversations can develop a genuine understanding of your preferences, communication style, and needs.

- Complex Problem Solving: Many real-world problems can't be solved in a single prompt. They require exploration, iteration, and building on previous insights—all impossible without substantial context length.

The companies that solve for context will unlock use cases we haven't even imagined yet. Think beyond today's fixed token windows, whether 100K tokens or larger, to systems that can hold entire books, codebases, or relationship histories in mind while having a conversation.

Integration: Where AI Meets Reality

The second frontier—perhaps even more important than context—is meaningful integration. The future belongs to AI that doesn't just live in a chat interface but weaves itself into the tools we already use.

True Integration Is:

- Invisible: The best integrations don't announce themselves; they reduce friction in existing workflows. Users shouldn't have to context-switch to a separate AI tool.

- Bidirectional: Not just pushing information into systems, but pulling relevant context out at the right moment. AI should read from and write to your existing data sources.

- Actionable: Moving beyond suggestions to actually executing or automating tasks across platforms—scheduling that meeting, updating that document, committing that code.

- Contextually Aware: Understanding not just the request but where it fits in your broader workflow and ecosystem of tools.
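As a rough sketch of what "bidirectional" and "actionable" could look like in code, the example below reads context out of a tool and then writes an action back to it. Everything here is hypothetical: the `Tool` protocol, the `InMemoryCalendar` stand-in, and `schedule_follow_up` are illustrative names, not any real product's API.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Tool(Protocol):
    """Hypothetical interface for a tool the AI can both read and write."""

    def read(self, query: str) -> list[str]: ...

    def write(self, entry: str) -> None: ...


@dataclass
class InMemoryCalendar:
    """Stand-in for a real calendar integration (assumption for the demo)."""

    events: list[str] = field(default_factory=list)

    def read(self, query: str) -> list[str]:
        # Pull out only the events that mention the query.
        return [e for e in self.events if query.lower() in e.lower()]

    def write(self, entry: str) -> None:
        self.events.append(entry)


def schedule_follow_up(tool: Tool, topic: str) -> str:
    related = tool.read(topic)  # bidirectional: pull context out first
    entry = f"Follow-up: {topic} (after {len(related)} earlier event(s))"
    tool.write(entry)  # actionable: perform the change, not just suggest it
    return entry


calendar = InMemoryCalendar(["Design review", "Budget sync"])
created = schedule_follow_up(calendar, "design")
```

Because the assistant depends only on the small `Tool` interface, the same logic could in principle sit behind any system that can be read from and written to.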

The Integration Gap

Most current AI applications fall dramatically short on integration. They exist as islands—powerful but disconnected from where work actually happens. This creates cognitive overhead: users must manually move information between systems, translate AI outputs into actions, and maintain context themselves.

Companies building for true integration are tackling thorny problems like:

- Real-time synchronization across multiple data sources

- Permission and security models that allow appropriate access

- Standardized APIs that enable consistent behavior across tools

- User interfaces that blend AI assistance naturally into existing workflows
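To give one of these problems some shape, here is a minimal sketch of a permission check that runs before an AI-initiated action. It assumes a simple model in which each grant names a resource and the actions allowed on it; `Grant`, `is_allowed`, and `guarded_action` are hypothetical names, and real systems would need far more (roles, auditing, expiry).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Hypothetical grant: which actions are allowed on one resource."""

    resource: str
    actions: frozenset[str]


def is_allowed(grants: list[Grant], resource: str, action: str) -> bool:
    # An action is allowed only if some grant covers this exact
    # resource and includes this action.
    return any(g.resource == resource and action in g.actions for g in grants)


def guarded_action(grants: list[Grant], resource: str, action: str) -> str:
    """Refuse the action unless a grant explicitly permits it."""
    if not is_allowed(grants, resource, action):
        raise PermissionError(f"{action} on {resource} is not permitted")
    return f"{action} on {resource} executed"


assistant_grants = [
    Grant("training_logs", frozenset({"read", "write"})),
    Grant("billing", frozenset({"read"})),
]

result = guarded_action(assistant_grants, "training_logs", "write")
```

The design choice worth noting is deny-by-default: anything not explicitly granted is refused, which is usually the safer starting point when an assistant can act on a user's behalf.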

From Theory to Practice

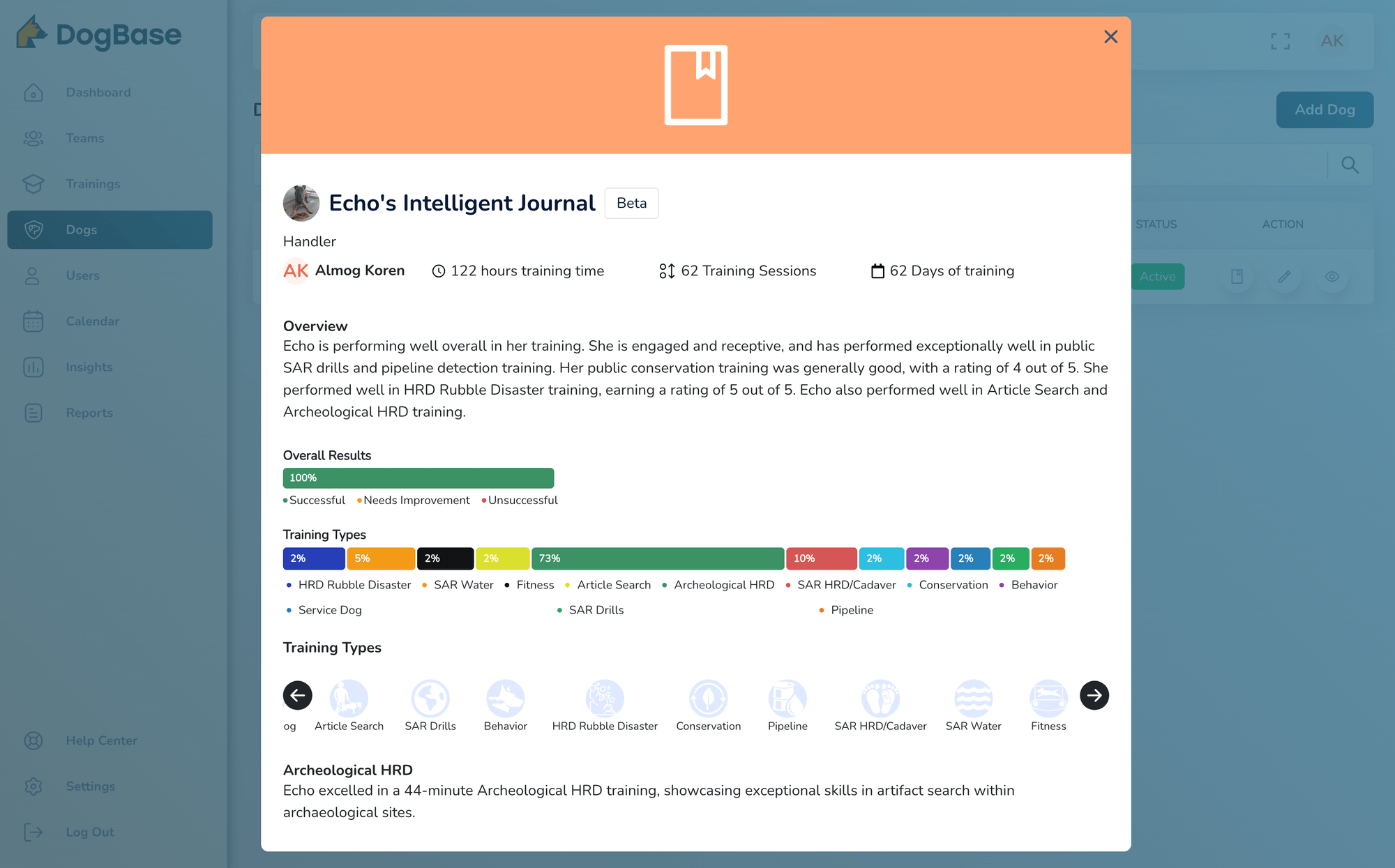

Our work at DogBase provides just one example of these principles in action. For dog handlers and trainers, we're creating systems where AI doesn't just generate text about dog training—it understands the complete context of a dog's history and seamlessly integrates with training logs, certification requirements, and performance metrics.

This is just a small illustration of the broader shift happening. Across industries, the winners won't be those with marginally better language models, but those who solve the harder problems of context persistence and meaningful integration.

Building the Contextual, Integrated Future

For startups and developers looking to make an impact in AI, focus less on competing with the giants on raw model capabilities. Instead:

- Identify workflows where contextual memory compounds in value with every interaction

- Find integration points where AI can remove friction rather than adding new steps

- Build domain-specific applications where deep understanding of context matters more than general intelligence

- Design for persistent value over time, not just one-off interactions

Looking Ahead

The AI landscape is evolving rapidly, but the direction is clear. As base models continue to commoditize, the real differentiation will come from how we apply these tools in context-rich, deeply integrated ways that solve genuine human problems.

If you're interested in exploring these ideas further, I'll be discussing practical applications of context-rich, integrated AI at Startup Yale 2025 during my talk "Navigating the AI Revolution: Practical Applications for Student Entrepreneurs."

The future belongs not to those who build the biggest models, but to those who apply AI within the right context and integrate it in ways that genuinely help people achieve their goals.

Almog Koren is the founder of DogBase and Almog R&D Ltd. He'll be speaking at Startup Yale 2025 on AI applications for student entrepreneurs.